A little bit of backstory here. I remember trying to get my hands on the 12 GB variant of the GeForce RTX 3080 for testing at the start of this year (2022), which was a bit of a mess. Given the global chip shortage and the high enthusiasm around cryptocurrencies at the time, I ended up suggesting that my friends skip the 12 GB variant and wait for the new series. So imagine my surprise when, during the GPU Technology Conference (GTC) 2022 event, a new GeForce RTX 4080 (12 GB) was announced. But unlike the GeForce RTX 3080 (12 GB), there were so many things wrong with this one.

Once the specs were revealed, the controversy followed. Eventually, on October 14, Nvidia said that it would be postponing or scrapping the release of the 12 GB RTX 4080 owing to concerns created by the nomenclature, with the launch of the 16 GB RTX 4080 staying unchanged. Many users were initially skeptical about getting a new RTX 4080 at all. But after using the product for a while now, let me tell you it is every bit the performance powerhouse we hoped for. It would be a shame to miss out on this product because of the confusion caused by the naming scheme.

In NVIDIA’s words: “The RTX 4080 12GB is a fantastic graphics card, but it’s not named right. Having two GPUs with the 4080 designation is confusing. So, we’re pressing the ‘unlaunch’ button on the 4080 12GB.” Thankfully, the RTX 4080 16GB is finally here, and there is no more confusion in the naming. You get just one variant, the 16 GB one. So if you still have any doubt about whether to buy this one or wait it out, let me use this review to tell you why the RTX 4080 is a must-buy for meeting the demands of current AAA games.

Nvidia RTX 4080 FE Review

The TL;DR version is that Nvidia’s GeForce RTX 4080 Founders Edition is a potent 4K GPU, but it’s also a bit pricey at $1199. Hardcore gamers looking for the best on the market right now will undoubtedly step up to the more powerful RTX 4090 at $1599. Nevertheless, for $400 less, the RTX 4080 is a modern success and a strong challenger in this segment, and in my opinion it offers good bang for your buck compared to last year’s GeForce RTX 3080 Ti, which launched at the same $1199 price point.

During the GTC event, NVIDIA promised performance up to 4x that of the 3080 Ti. They aim to pull this off with the help of 16GB of superfast G6X memory and the highly efficient “Ada Lovelace” architecture (honoring the English mathematician), which offers a substantial performance boost and AI-powered visuals. It’s interesting to note that this is the first architecture from Nvidia to contain a first and last name. According to NVIDIA, the GeForce 40 series is up to 2x faster in rasterized games and up to 4x faster in ray-traced games.

On paper, the Nvidia GeForce RTX 4080 is also a significant upgrade over the previous generation’s RTX 3080. The number of CUDA cores increases from 8,704 to 9,728. This, together with the increase in boost clock to 2,505 MHz, results in a theoretical performance of about 49 TFLOPS.
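For anyone who wants to check where that figure comes from, here is a minimal sketch of the usual back-of-the-envelope formula. It assumes each CUDA core completes one fused multiply-add (counted as two floating-point operations) per clock; the function name is mine, and the RTX 3080 boost clock of 1.71 GHz is taken from the comparison table later in this review.

```python
def fp32_tflops(cuda_cores: int, boost_clock_mhz: float) -> float:
    """Peak FP32 throughput in TFLOPS.

    Assumption: every CUDA core retires one fused multiply-add per clock,
    which is counted as two floating-point operations.
    """
    flops_per_core_per_clock = 2
    flops = cuda_cores * flops_per_core_per_clock * boost_clock_mhz * 1e6
    return flops / 1e12

rtx_4080 = fp32_tflops(9728, 2505)  # figures quoted in this review
rtx_3080 = fp32_tflops(8704, 1710)  # boost clock from the comparison table

print(f"RTX 4080: {rtx_4080:.1f} TFLOPS")  # RTX 4080: 48.7 TFLOPS
print(f"RTX 3080: {rtx_3080:.1f} TFLOPS")  # RTX 3080: 29.8 TFLOPS
```

This is a theoretical peak only; real games rarely keep every core busy on every clock, so treat it as a ceiling rather than a benchmark.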

With all of this capacity, both content creators and gamers will be able to make use of cutting-edge platform features like neural rendering, 3D rendering, video editing, visual design, and ultra-high frame rates. According to a statement provided by NVIDIA, “This massive advancement in GPU technology is the gateway to the most immersive gaming experiences, incredible AI features, and the fastest content creation workflows. These GPUs push state-of-the-art graphics into the future.”

Design

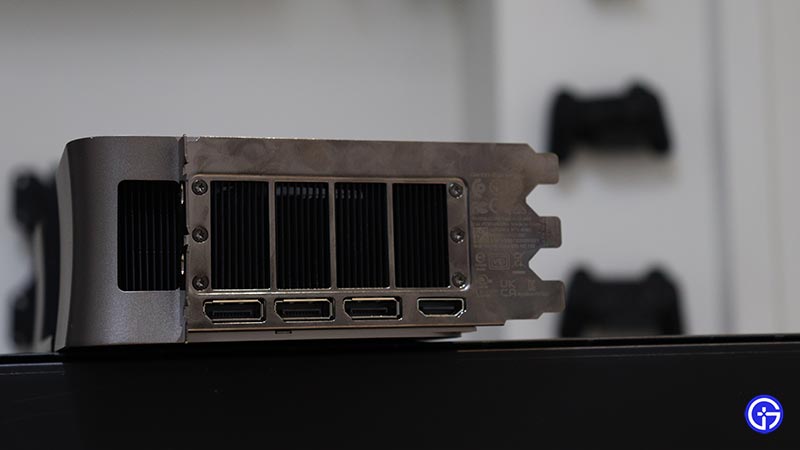

This time, Nvidia decided to give the RTX 4080 FE the same hefty triple-slot heatsink as the RTX 4090. As a result, the Nvidia RTX 4080 Founders Edition looks just like the RTX 4090 Founders Edition. The same eye-catching style with sharp corners and straightforward, flowing lines is present, which also means it will fit into fewer PC cabinets than earlier cards.

Yes, it’s laughably large, but when you attach a vast cooler designed for a 450W GPU to one that sips 320W, the end result is the coolest, quietest, and most composed high-end reference design we’ve ever tested. In the hand, it has a rather stiff and substantial feel. Although it might not appeal to everyone, it is at least a little unusual.

The 4080 symbol engraved on the front is the sole visible difference between the two cards from any angle. The fact that Nvidia looks to be putting the 4090’s cooler on the 4080 isn’t necessarily a bad thing if you’re worried about keeping your card cool. However, it can be an issue if you are limited by space.

The Age of 12VHPWR

In the months prior to the debut, many people wondered whether the 40 series would be the very first PCI Express 5.0 GPUs available to consumers. There was a real question about whether existing PSUs would remain compatible with the new series of graphics cards, given that ATX 2.x power supplies are not designed to handle the larger power spikes that might accompany the new architecture. We now know that the RTX 4090 and 4080 do not exhibit the high power excursions that the PCIe 5.0 specification allows for. Nevertheless, they do use the 12VHPWR power connector.

The ATX 3.0 specification was published in February 2022. It came with the brand-new 16-pin 12VHPWR connector for PCI Express graphics cards, which has 12 power pins and 4 sideband signal pins. The connector is rated to supply graphics cards with up to 600 W, while the often-quoted 662 W figure is the theoretical ceiling of its six 12 V pins at 9.2 A each.
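The 662 W figure is easy to reproduce from the per-pin numbers. The sketch below assumes that six of the 12 power pins carry +12 V (the other six being ground returns) and that each pin is good for 9.2 A; the constant and function names are my own.

```python
POWER_PINS_12V = 6     # assumption: 6 of the 12 power pins carry +12 V, 6 are ground
VOLTS = 12.0           # rail voltage
AMPS_PER_PIN = 9.2     # per-pin current quoted in this review

def connector_ceiling_watts(pins: int = POWER_PINS_12V,
                            volts: float = VOLTS,
                            amps_per_pin: float = AMPS_PER_PIN) -> float:
    """Theoretical power ceiling: pins x volts x amps per pin."""
    return pins * volts * amps_per_pin

ceiling = connector_ceiling_watts()
print(f"Theoretical ceiling: {ceiling:.1f} W")  # Theoretical ceiling: 662.4 W
```

Keep in mind that this is a pin-level ceiling, not a promise that any given cable or power supply will deliver that much.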

Like the RTX 4090, the Nvidia RTX 4080 ships with an input adaptor that links three 8-pin connections to its 12VHPWR port. The connector incorporates sideband data lines that let components negotiate power capabilities with the PSU, so they do not draw more power than the PSU is capable of delivering. The ATX 3.0 specification also has stricter requirements for handling power spikes.

However, there’s no need to fear, because RTX 4090 and 4080 cards, whether they are Founders Edition versions or board partner cards, come with an adaptor that converts up to four 6+2-pin PCI Express power connectors into a single 12VHPWR connector. This means that anyone with a suitably sized existing power supply can start using a new RTX 40 series card right now without buying an extra accessory. The sole factor to take into account is how much power the card needs.
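To get a feel for the headroom the adaptor leaves, here is a rough sketch. It assumes the usual 150 W rating for each 8-pin (6+2) PCIe cable and ignores the power a card can also draw from the slot; the 320 W card power comes from the comparison table later in this review, and the function name is hypothetical.

```python
PCIE_8PIN_WATTS = 150  # assumption: standard rating of one 8-pin (6+2) PCIe cable

def adapter_headroom_watts(card_power_w: int, cables: int) -> int:
    """Spare capacity of the adaptor's input side, ignoring slot power."""
    return cables * PCIE_8PIN_WATTS - card_power_w

# RTX 4080: three 8-pin inputs feeding a 320 W card
headroom = adapter_headroom_watts(card_power_w=320, cables=3)
print(f"Adaptor input capacity: {3 * PCIE_8PIN_WATTS} W")  # Adaptor input capacity: 450 W
print(f"Headroom over card power: {headroom} W")           # Headroom over card power: 130 W
```

A positive number only tells you the cables are not the limit; the power supply itself still has to meet the recommended system power for the card.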

RTX & DLSS 3 Upgrades

It wouldn’t be a new NVIDIA GPU generation without significant advancements in ray tracing. The dedicated RT Cores take on the ray tracing work for each frame, and with DLSS again running on the Tensor Cores, you can play games at frame rates that are genuinely astonishing compared to earlier generations.

NVIDIA RTX is the most cutting-edge platform for ray tracing and AI technologies, which are transforming how we play and create. Over 250 popular games and applications use RTX to deliver realistic visuals at lightning-fast speeds or cutting-edge AI features like NVIDIA DLSS and NVIDIA Broadcast. RTX is the new norm.

Ray tracing, which mimics how light behaves in the real world, is unleashed in all its splendor by the NVIDIA Ada Lovelace architecture. You can enjoy stunningly realistic VR content like never before thanks to the GeForce RTX 40 Series and its third-generation RT Cores.

How DLSS 3 Changes Everything

DLSS is an innovative advancement in AI-powered graphics that dramatically increases performance. DLSS 3 uses AI to generate additional high-quality frames and is powered by the new fourth-generation Tensor Cores and Optical Flow Accelerator on GeForce RTX 40 Series GPUs.

DLSS 3 relies on the new-generation Optical Flow Accelerator (OFA) found in Ada Lovelace RTX GPUs. The new OFA is faster and more accurate than the OFA built into Turing and Ampere RTX GPUs. As a result, the RTX 40 Series is the only one that supports DLSS 3’s frame generation.

DLSS 3 builds on DLSS 2 by adding optical-flow frame generation. The frame generation technique creates a new frame that transitions seamlessly between two frames produced by the rendering process. As a result, one extra frame is produced for each rendered frame.
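To picture what that does to the stream of frames you actually see, here is a toy sketch. It only models the ordering of frames, with one generated frame slotted between each pair of rendered ones; it says nothing about how NVIDIA builds the generated frame, and the function and frame labels are made up for illustration.

```python
def present_sequence(rendered: list[str]) -> list[str]:
    """Interleave one generated frame between each pair of consecutive rendered frames."""
    shown = []
    for prev, nxt in zip(rendered, rendered[1:]):
        shown.append(prev)
        shown.append(f"gen({prev},{nxt})")  # placeholder for the AI-generated in-between frame
    if rendered:
        shown.append(rendered[-1])  # the last rendered frame has no successor yet
    return shown

frames = ["R0", "R1", "R2", "R3"]
shown = present_sequence(frames)
print(shown)
# ['R0', 'gen(R0,R1)', 'R1', 'gen(R1,R2)', 'R2', 'gen(R2,R3)', 'R3']
print(f"{len(frames)} rendered -> {len(shown)} displayed")  # 4 rendered -> 7 displayed
```

Over a long run of frames the displayed count approaches twice the rendered count, which is why frame generation is often described as roughly doubling the frame rate in the best case.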

It improves performance by producing more frames using AI. To do so, DLSS 3 analyzes consecutive frames along with motion data from the new Optical Flow Accelerator in GeForce RTX 40 Series GPUs.

DLSS 3 also includes Super Resolution, which uses AI to produce higher-resolution frames from lower-resolution inputs and boosts performance on all GeForce RTX GPUs. To rebuild native-quality images, it samples multiple lower-resolution images and incorporates motion information and feedback from earlier frames.
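The performance win from upscaling comes from how few pixels the GPU has to render in the first place. Here is a small sketch of that arithmetic; the 0.5 per-axis render scale is purely an illustrative assumption of mine, not a setting taken from this review, and the function names are hypothetical.

```python
def render_resolution(out_w: int, out_h: int, scale: float) -> tuple[int, int]:
    """Internal render resolution for a given per-axis scale factor."""
    return round(out_w * scale), round(out_h * scale)

def pixel_ratio(out_w: int, out_h: int, in_w: int, in_h: int) -> float:
    """How many output pixels are produced per rendered pixel."""
    return (out_w * out_h) / (in_w * in_h)

out_w, out_h = 3840, 2160  # 4K output
scale = 0.5                # illustrative assumption: render at half the width and height
in_w, in_h = render_resolution(out_w, out_h, scale)
ratio = pixel_ratio(out_w, out_h, in_w, in_h)

print(f"Render at {in_w}x{in_h}, display at {out_w}x{out_h}")  # Render at 1920x1080, display at 3840x2160
print(f"Output pixels per rendered pixel: {ratio:.2f}")          # Output pixels per rendered pixel: 4.00
```

Rendering a quarter of the pixels does not translate into exactly four times the frame rate, since upscaling itself takes time and not all GPU work scales with resolution.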

With more than 200 games and applications already using DLSS, including popular independent titles like Deep Rock Galactic and major AAA titles like Cyberpunk 2077 and Marvel’s Spider-Man Remastered, the technology is revolutionizing the video game industry.

RTX 4080 vs RTX 4090 vs 30-series GPUs

Instead of listing the percentage differences in CUDA cores and clock speeds one by one, here is a table comparing all the reference models that you might find interesting:

| GPU Engine Specs: | GeForce RTX 4090 | GeForce RTX 4080 | GeForce RTX 3090 Ti | GeForce RTX 3090 | GeForce RTX 3080 Ti | GeForce RTX 3080 | RTX 3070 Ti | RTX 3070 | RTX 3060 Ti | RTX 3060 | RTX 3050 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| NVIDIA CUDA Cores | 16384 | 9728 | 10752 | 10496 | 10240 | 8960 / 8704 | 6144 | 5888 | 4864 | 3584 | 2560 / 2304 (1) |
| Boost Clock (GHz) | 2.52 | 2.51 | 1.86 | 1.7 | 1.67 | 1.71 | 1.77 | 1.73 | 1.67 | 1.78 | 1.78 / 1.76 (1) |
| Base Clock (GHz) | 2.23 | 2.21 | 1.67 | 1.4 | 1.37 | 1.26 / 1.44 | 1.58 | 1.5 | 1.41 | 1.32 | 1.55 / 1.51 (1) |

| Memory Specs: | GeForce RTX 4090 | GeForce RTX 4080 | GeForce RTX 3090 Ti | GeForce RTX 3090 | GeForce RTX 3080 Ti | GeForce RTX 3080 | RTX 3070 Ti | RTX 3070 | RTX 3060 Ti | RTX 3060 | RTX 3050 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Standard Memory Config | 24 GB GDDR6X | 16 GB GDDR6X | 24 GB GDDR6X | 24 GB GDDR6X | 12 GB GDDR6X | 12 GB GDDR6X / 10 GB GDDR6X | 8 GB GDDR6X | 8 GB GDDR6 | 8 GB GDDR6 / 8 GB GDDR6X | 12 GB GDDR6 / 8 GB GDDR6 | 8 GB GDDR6 |
| Memory Interface Width | 384-bit | 256-bit | 384-bit | 384-bit | 384-bit | 384-bit / 320-bit | 256-bit | 256-bit | 256-bit | 192-bit / 128-bit | 128-bit |

| Display Support: | GeForce RTX 4090 | GeForce RTX 4080 | GeForce RTX 3090 Ti | GeForce RTX 3090 | GeForce RTX 3080 Ti | GeForce RTX 3080 | RTX 3070 Ti | RTX 3070 | RTX 3060 Ti | RTX 3060 | RTX 3050 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Maximum Resolution & Refresh Rate (1) | 4K at 240Hz or 8K at 60Hz with DSC, HDR | 4K at 240Hz or 8K at 60Hz with DSC, HDR | 7680×4320 | 7680×4320 | 7680×4320 | 7680×4320 | 7680×4320 | 7680×4320 | 7680×4320 | 7680×4320 | 7680×4320 |
| Standard Display Connectors | HDMI(2), 3x DisplayPort(3) | HDMI(2), 3x DisplayPort(3) | HDMI(3), 3x DisplayPort(4) | HDMI(3), 3x DisplayPort(4) | HDMI(3), 3x DisplayPort(4) | HDMI(3), 3x DisplayPort(4) | HDMI(3), 3x DisplayPort(4) | HDMI(3), 3x DisplayPort(4) | HDMI(3), 3x DisplayPort(4) | HDMI(3), 3x DisplayPort(4) | HDMI(3), 2x DisplayPort(4) |
| Multi Monitor | up to 4(5) | up to 4(5) | 4 | 4 | 4 | 4 | 4 | 4 | 4 | 4 | 4 |
| HDCP | 2.3 | 2.3 | 2.3 | 2.3 | 2.3 | 2.3 | 2.3 | 2.3 | 2.3 | 2.3 | 2.3 |

| Card Dimensions: | GeForce RTX 4090 | GeForce RTX 4080 | GeForce RTX 3090 Ti | GeForce RTX 3090 | GeForce RTX 3080 Ti | GeForce RTX 3080 | RTX 3070 Ti | RTX 3070 | RTX 3060 Ti | RTX 3060 | RTX 3050 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Length | 304 mm | 304 mm | 313 mm | 313 mm | 285 mm | 285 mm | 267 mm | 242 mm | 242 mm | | |
| Width | 137 mm | 137 mm | 138 mm | 138 mm | 112 mm | 112 mm | 112 mm | 112 mm | 112 mm | | |
| Slot | 3-Slot (61mm) | 3-Slot (61mm) | 3-Slot | 3-Slot | 2-Slot | 2-Slot | 2-Slot | 2-Slot | 2-Slot | | |

| Thermal and Power Specs: | GeForce RTX 4090 | GeForce RTX 4080 | GeForce RTX 3090 Ti | GeForce RTX 3090 | GeForce RTX 3080 Ti | GeForce RTX 3080 | RTX 3070 Ti | RTX 3070 | RTX 3060 Ti | RTX 3060 | RTX 3050 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Maximum GPU Temperature (in C) | 90 | 90 | 92 | 93 | 93 | 93 | 93 | 93 | 93 | 93 | 93 |
| Graphics Card Power (W) | 450 | 320 | 450 | 350 | 350 | 350 / 320 | 290 | 220 | 200 | 170 | 130 |
| Required System Power (W) (4) | 850 | 750 | 850 | 750 | 750 | 750 | 750 | 650 | 600 | 550 | 550 |
| Required Power Connectors | 3x PCIe 8-pin cables (adapter in box) OR 1x 450 W or greater PCIe Gen 5 cable | 3x PCIe 8-pin cables (adapter in box) OR 1x 450 W or greater PCIe Gen 5 cable | 3x PCIe 8-pin cables (adapter in box) OR 450W or greater PCIe Gen 5 cable | 2x PCIe 8-pin (adapter to 1x 12-pin included) | 2x PCIe 8-pin | 2x PCIe 8-pin | 2x PCIe 8-pin | 1x PCIe 8-pin | 1x PCIe 8-pin | 1x PCIe 8-pin | 1x PCIe 8-pin |

| Technology Support: | GeForce RTX 4090 | GeForce RTX 4080 | GeForce RTX 3090 Ti | GeForce RTX 3090 | GeForce RTX 3080 Ti | GeForce RTX 3080 | RTX 3070 Ti | RTX 3070 | RTX 3060 Ti | RTX 3060 | RTX 3050 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Ray Tracing Cores | 3rd Generation | 3rd Generation | 2nd Generation | 2nd Generation | 2nd Generation | 2nd Generation | 2nd Generation | 2nd Generation | 2nd Generation | 2nd Generation | 2nd Generation |
| Tensor Cores | 4th Generation | 4th Generation | 3rd Generation | 3rd Generation | 3rd Generation | 3rd Generation | 3rd Generation | 3rd Generation | 3rd Generation | 3rd Generation | 3rd Generation |
| NVIDIA Architecture | Ada Lovelace | Ada Lovelace | Ampere | Ampere | Ampere | Ampere | Ampere | Ampere | Ampere | Ampere | Ampere |
| Microsoft DirectX 12 Ultimate | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| NVIDIA DLSS 3 | Yes | Yes | No | No | No | No | No | No | No | No | No |
| NVIDIA Reflex | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| NVIDIA Broadcast | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| PCI Express Gen 4 | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| Resizable BAR | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| NVIDIA GeForce Experience™ | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| NVIDIA Ansel | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| NVIDIA FreeStyle | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| NVIDIA ShadowPlay | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| NVIDIA Highlights | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| NVIDIA G-SYNC | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| Game Ready Drivers | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| NVIDIA Studio Drivers | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| NVIDIA Omniverse | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| NVIDIA GPU Boost™ | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| NVIDIA NVLink™ (SLI-Ready) | No | No | Yes | Yes | – | – | – | – | – | – | – |
| Vulkan RT API, OpenGL 4.6 | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes |
| NVIDIA Encoder (NVENC) | 2x 8th Generation | 2x 8th Generation | 7th Generation | 7th Generation | 7th Generation | 7th Generation | 7th Generation | 7th Generation | 7th Generation | 7th Generation | 7th Generation |
| NVIDIA Decoder (NVDEC) | 5th Generation | 5th Generation | 5th Generation | 5th Generation | 5th Generation | 5th Generation | 5th Generation | 5th Generation | 5th Generation | 5th Generation | 5th Generation |
| CUDA Capability | 8.9 | 8.9 | 8.6 | 8.6 | 8.6 | 8.6 | 8.6 | 8.6 | 8.6 | 8.6 | 8.6 |
| VR Ready | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | Yes | – |
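If you do want the percentage differences after all, they are quick to work out from the table. Here is a small sketch using the CUDA core, boost clock, and card power values listed above for three of the cards; the dictionary layout and function name are just my own way of organizing the numbers.

```python
# Values copied from the comparison table above
specs = {
    "RTX 4090":    {"cuda_cores": 16384, "boost_ghz": 2.52, "power_w": 450},
    "RTX 4080":    {"cuda_cores": 9728,  "boost_ghz": 2.51, "power_w": 320},
    "RTX 3080 Ti": {"cuda_cores": 10240, "boost_ghz": 1.67, "power_w": 350},
}

def pct_change(new: float, old: float) -> float:
    """Percentage change going from `old` to `new`."""
    return (new - old) / old * 100

def compare(card: str, baseline: str) -> dict[str, float]:
    """Percentage difference of every listed spec of `card` relative to `baseline`."""
    return {
        key: round(pct_change(specs[card][key], specs[baseline][key]), 1)
        for key in specs[card]
    }

print("RTX 4080 vs RTX 3080 Ti:", compare("RTX 4080", "RTX 3080 Ti"))
print("RTX 4090 vs RTX 4080:   ", compare("RTX 4090", "RTX 4080"))
```

Run against these numbers, the RTX 4080 has about 5% fewer CUDA cores than the RTX 3080 Ti but a boost clock roughly 50% higher, while the RTX 4090 carries about 68% more CUDA cores than the RTX 4080.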

Verdict

In the words of Jensen Huang (President of NVIDIA), “Moore’s Law’s dead. And the ability for Moore’s Law to deliver twice the performance at the same cost, or at the same performance, half the cost, every year and a half, is over. It’s completely over, and so the idea that a chip is going to go down in cost over time, unfortunately, is a story of the past”.

Based on the GeForce RTX 40-series pricing, it appears that Nvidia is extremely confident in the product’s value and performance. But consider whether you’ll be able to use an RTX 4080 to its fullest extent, and whether your other components will bottleneck it. If they won’t, know that the card stays remarkably cool and its fans stay quiet.

It is faster and has a ton of additional features, making it a clear improvement over the prior cards. The RTX 4080’s sole significant drawback is how it compares with the 4090. It’s rather obvious that Nvidia significantly cut down the RTX 4090’s best specs to create the RTX 4080. It is also $400 cheaper than the 4090. But if you have the option of purchasing the 4090 for $400 extra, is it fair to forgo the 4080? It all boils down to your needs and your budget.

For e-sports enthusiasts, the RTX 4080 offers excellent value for the performance on 1080p monitors with high refresh rates. Oh, and did I forget to mention that the RTX 4080’s maximum display resolution support is 4K at 240Hz or 8K at 60Hz, with DSC? It is undeniable that the GeForce RTX 4080 is quick. Yes, it is significantly less powerful than the RTX 4090. However, the feature set is strong and the energy consumption is acceptable. Unlike cards from the previous generation, it has no trouble maintaining the desired 60 fps at 4K resolution with all the options turned up to max, even in demanding games like Cyberpunk 2077.